Everchanging Cities

Narrating Calvino's stories through VR experiences

Concept

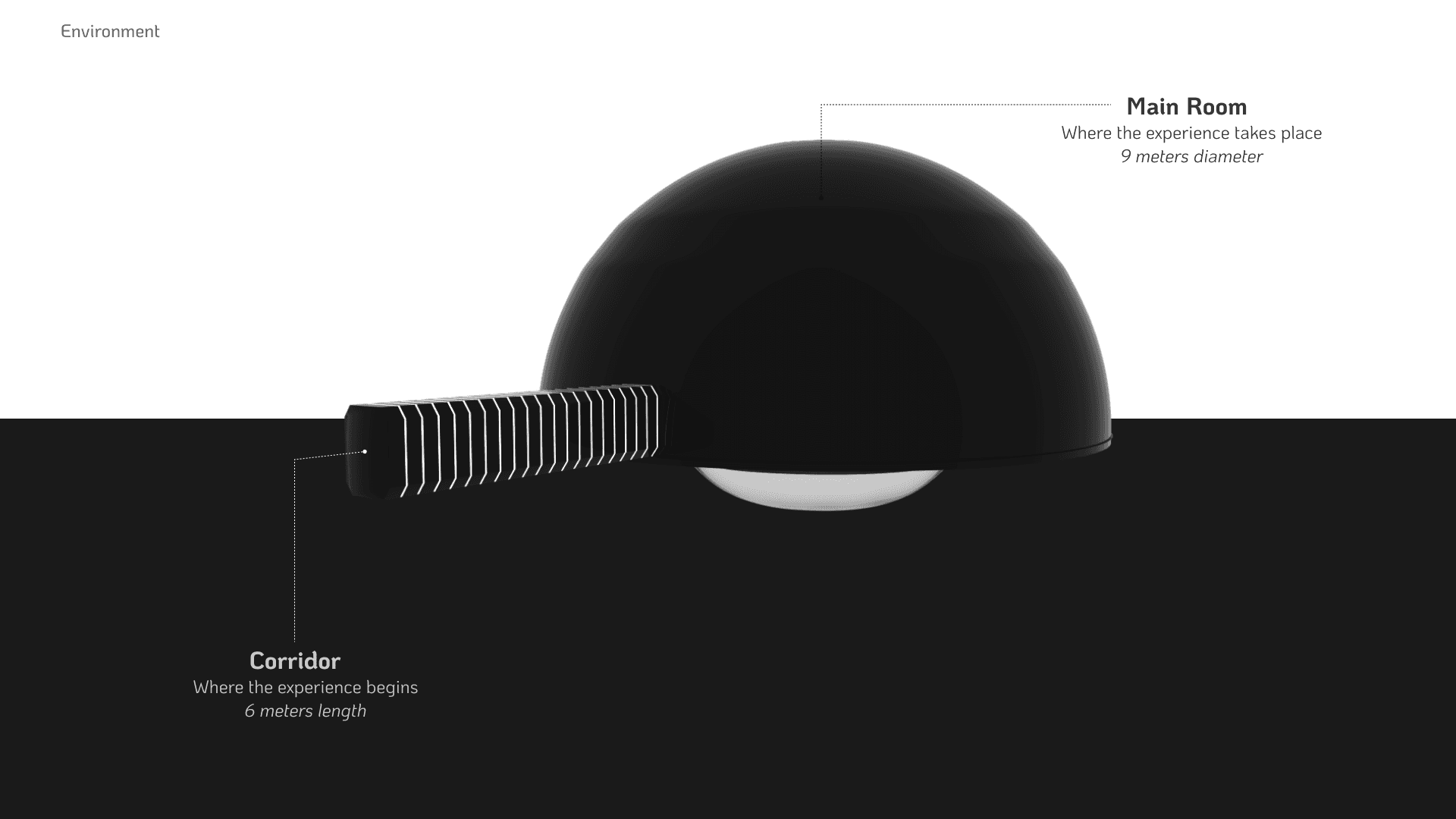

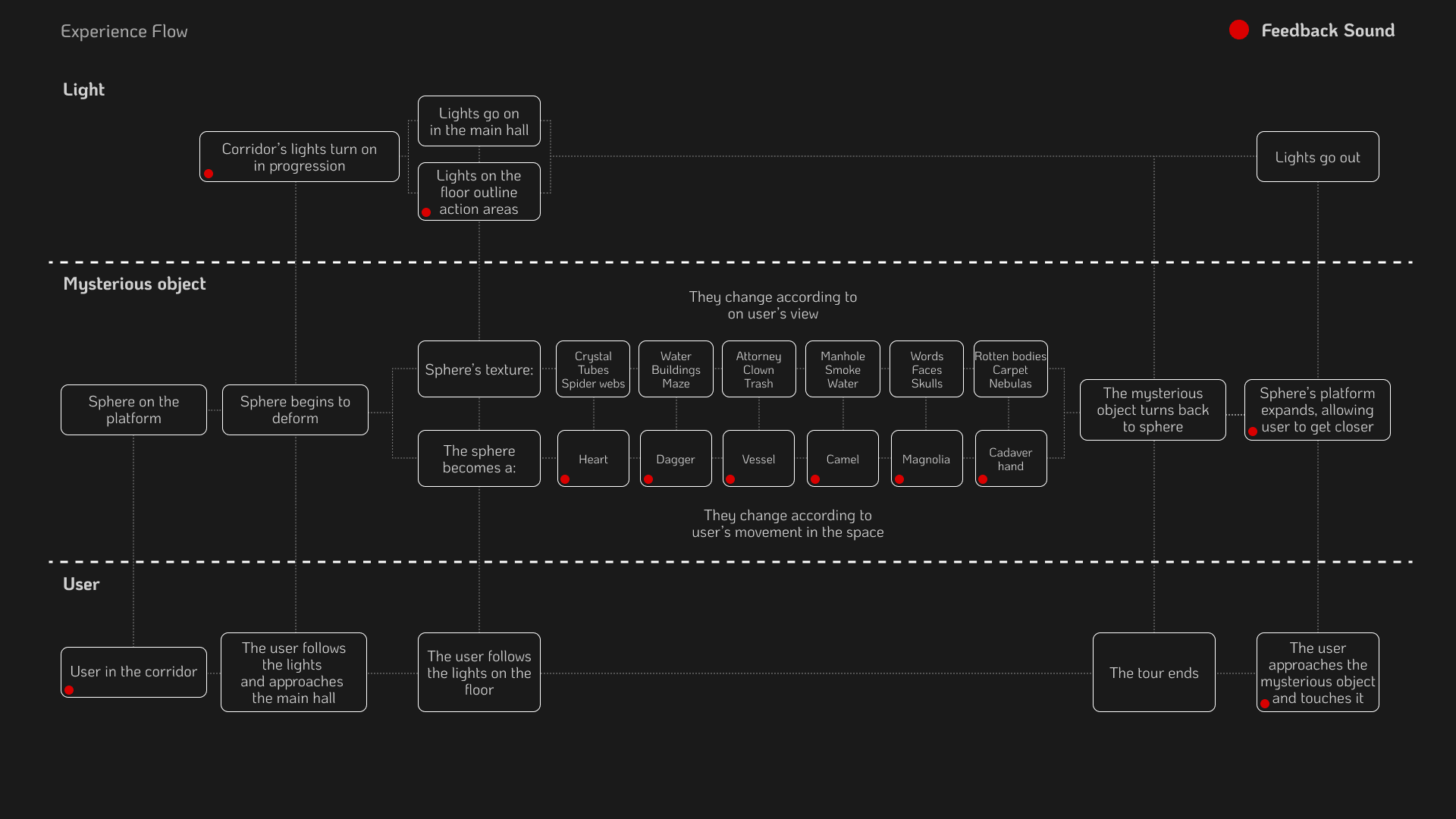

Everchanging Cities is a virtual reality experience that explores the themes of change and perspective through conceptual and physical metaphors. The interactive experience invites users to reflect on dissonant thoughts while discovering the narrative at their own pace.

Inspired by Italo Calvino's Invisible Cities, the experience

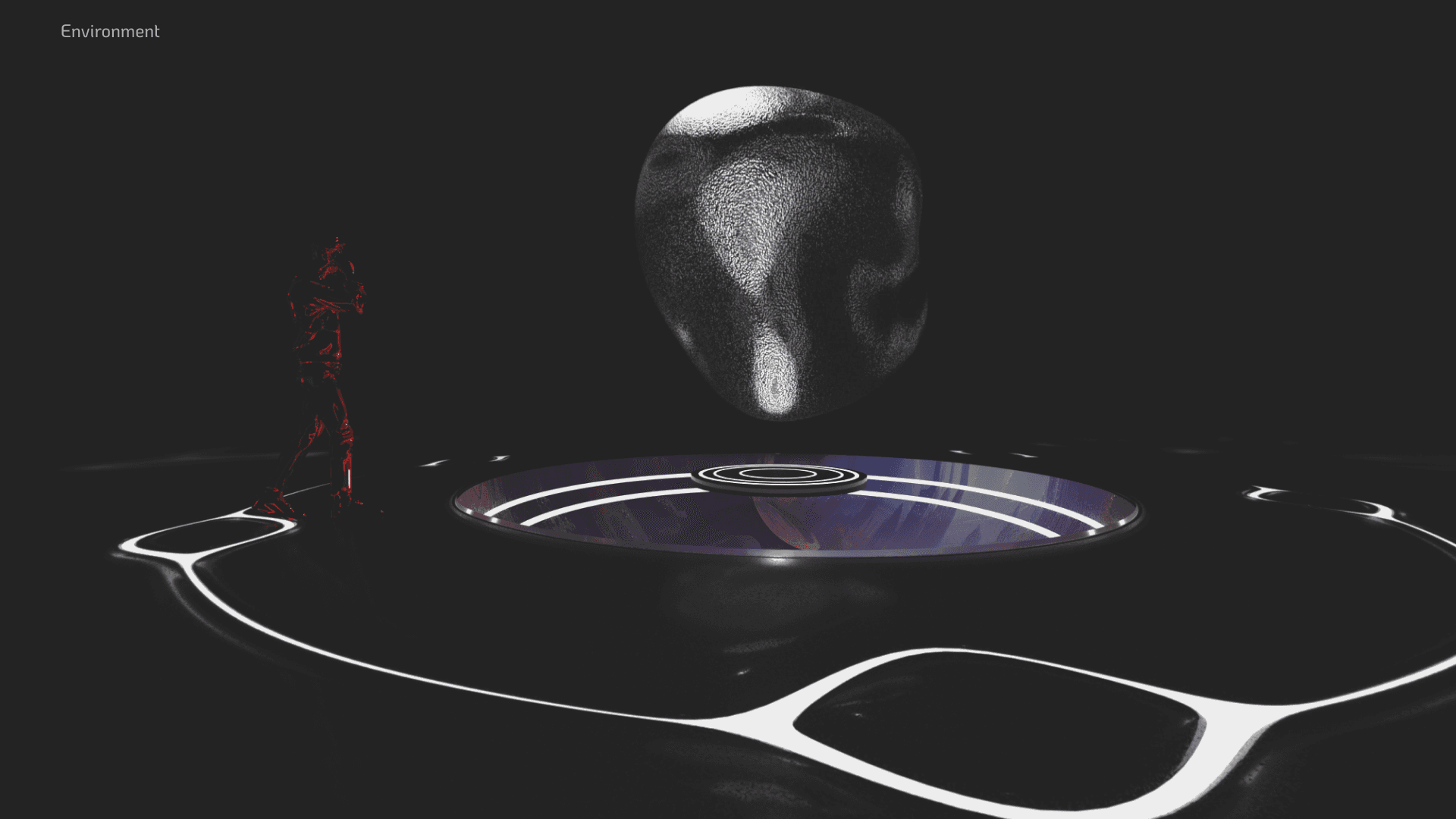

unfolds in a pitch-black room where a suspended object morphs as the user approaches. These transformations represent the essence of the cities, conveyed through objects and textures. The journey culminates in the user’s ability to finally interact with the mysterious object, deepening the story’s meaning.

Role

Interaction Designer

AI Artist

3D Artist

Collaborators

Alessio Brioschi

Andrea Lo Curto

Lisa Buttaroni

Lorenzo Castagna

Gianluca Zoni

Tools

Figma

Unity

Blender

Houdini

Oculus SDK

MidJourney

Stable Diffusion

Duration

4 weeks across a semester

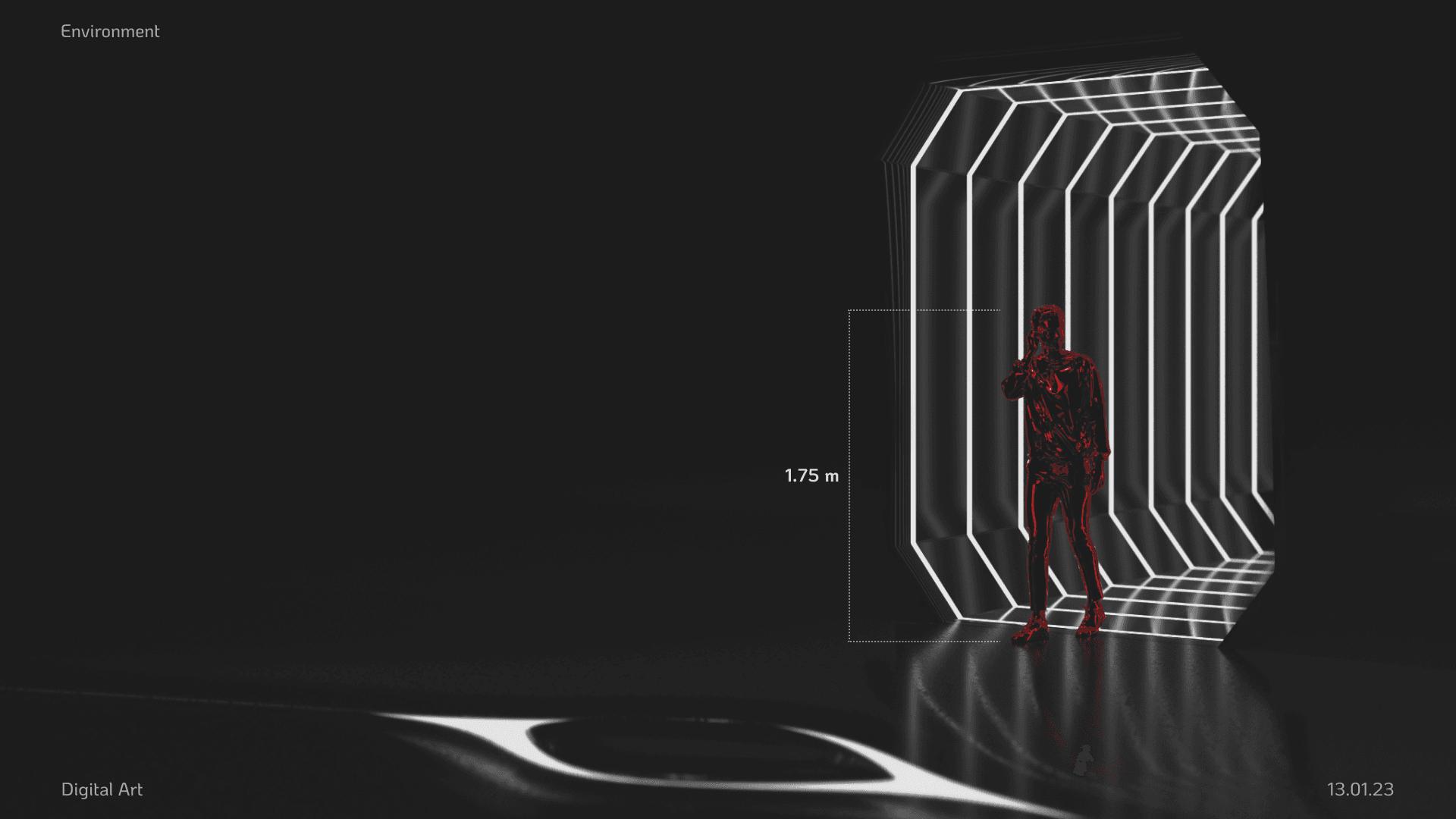

This interactive design project uses generative AI and VR to tell a story through metaphors and interactive elements, allowing users to explore at their own pace. Inspired by Italo Calvino’s Invisible Cities, the experience lets users uncover stories from the novel via commercial VR headsets, blending narrative with immersive technology.

The user starts in a pitch-black room with an object suspended at its center. As the user approaches, the object starts morphing. The morphs are meant to tell the story of the cities through their objects and textures and the relations between them. At the end of the experience, the user is finally able to touch the mysterious object.

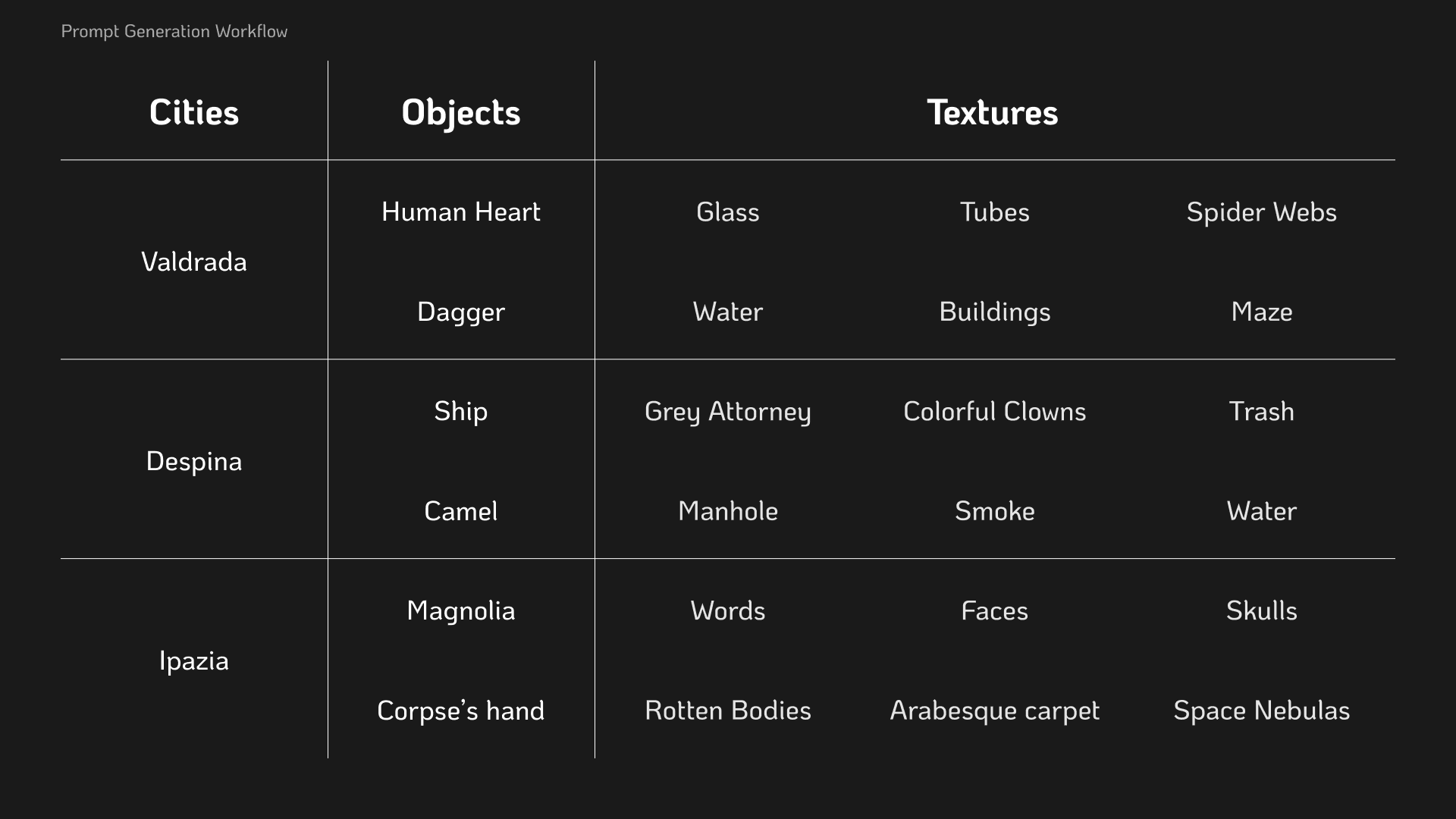

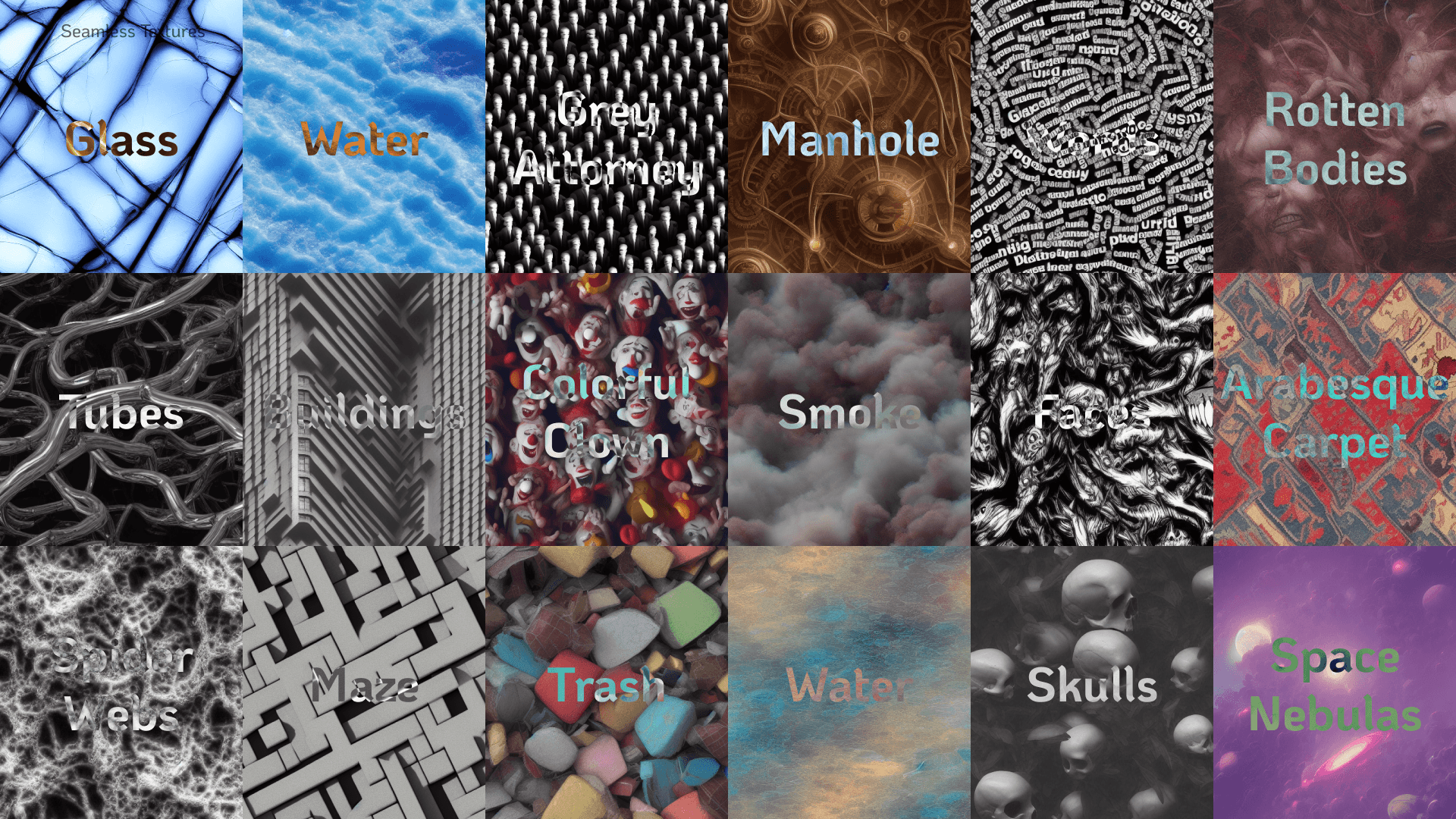

Each 3D model and texture comes from one of the Invisible Cities. Their progression, together with the city shown below the object, is meant to recall, both directly and indirectly, the stories and the world of Calvino’s novel.

Process

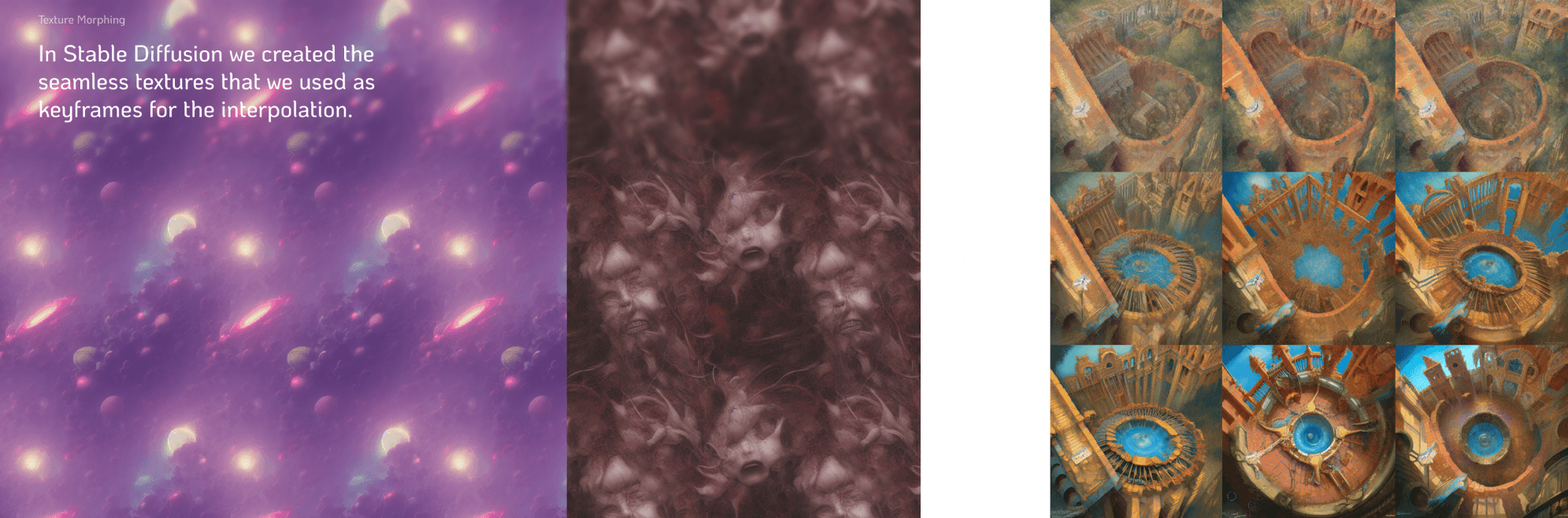

For the images of the cities, we tried different models and different CFG scale values (the setting that controls how closely the output follows the prompt; lower values give the AI more freedom).

Since these images are meant to be seen from above, we specified a bird’s-eye view in the prompts.
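As a rough illustration of what the CFG scale does, the sketch below applies the standard classifier-free guidance blend to toy numbers. It is a generic example, not the project's image pipeline: the function name `apply_cfg` and the sample values are hypothetical, and real models apply this blend to large noise-prediction tensors at every denoising step.

```python
def apply_cfg(uncond, cond, scale):
    """Classifier-free guidance: push the prediction away from the
    unconditional result and toward the prompt-conditioned one."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]

# Toy stand-ins for a model's two predictions (hypothetical numbers).
uncond = [0.0, 1.0, 2.0]   # prediction that ignores the prompt
cond = [1.0, 1.0, 0.0]     # prediction that follows the prompt

# A scale of 1 returns the conditioned prediction unchanged;
# larger scales exaggerate the difference the prompt makes.
for scale in (1.0, 4.0, 7.5, 12.0):
    print(scale, apply_cfg(uncond, cond, scale))
```

Low scales leave the model more room to drift from the prompt, which matches the "A.I. freedom" described above, while high scales enforce the prompt more strictly.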

The renders and the environments were created in Blender and refined in Unity to fit the real-time limitations of the Quest 2. The metamorphosis was built in Houdini with a node network: each 3D model is converted into a voxel volume, and the generated volume is then morphed into the shape of the next object. The outputs are converted back into meshes to regain the initial level of detail and to apply the textures.
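The volume-based morph can be sketched outside Houdini in a few lines. The example below is a minimal illustration under stated assumptions, not the project's node network: it uses two analytic shapes (a sphere and a cube) in place of the real models, samples each as a signed distance volume on a tiny grid, and blends the two volumes linearly. All function names and the grid size are hypothetical, and the final meshing step is only indicated by counting the voxels inside the surface.

```python
import math

N = 16  # voxels per axis (tiny grid, for illustration only)

def grid_sdf(sdf):
    """Sample a signed distance function at every voxel centre in [-1, 1]^3."""
    coords = [-1.0 + (2.0 * i + 1.0) / N for i in range(N)]
    return [[[sdf(x, y, z) for z in coords] for y in coords] for x in coords]

def sphere(x, y, z, r=0.6):
    """Signed distance to a sphere of radius r centred at the origin."""
    return math.sqrt(x * x + y * y + z * z) - r

def box(x, y, z, h=0.5):
    """Signed distance to an axis-aligned cube with half-size h."""
    qx, qy, qz = abs(x) - h, abs(y) - h, abs(z) - h
    outside = math.sqrt(max(qx, 0.0) ** 2 + max(qy, 0.0) ** 2 + max(qz, 0.0) ** 2)
    inside = min(max(qx, qy, qz), 0.0)
    return outside + inside

def morph(vol_a, vol_b, t):
    """Linearly blend two distance volumes; t=0 gives A, t=1 gives B."""
    return [[[(1 - t) * a + t * b for a, b in zip(row_a, row_b)]
             for row_a, row_b in zip(plane_a, plane_b)]
            for plane_a, plane_b in zip(vol_a, vol_b)]

def occupied(vol):
    """Count voxels on or inside the surface (distance <= 0)."""
    return sum(1 for plane in vol for row in plane for d in row if d <= 0)

a = grid_sdf(sphere)  # stands in for the current object
b = grid_sdf(box)     # stands in for the next object

for step in range(5):
    t = step / 4
    print(f"t={t:.2f} occupied voxels: {occupied(morph(a, b, t))}")
```

In a production pipeline, each blended volume would be turned back into a mesh at the zero level of the distance field, which corresponds to the mesh reconversion step described above.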